HIPAA-compliant AI for law firms refers to AI tools that satisfy the Health Insurance Portability and Accountability Act’s requirements for handling protected health information (PHI). In practice, that means signed Business Associate Agreements, encryption controls, data retention limits, and documented safeguards. For litigation attorneys who process medical records, draft demand letters, or summarize depositions, this is not an abstract compliance exercise. It determines which AI tools you can legally use with client medical data and which ones put your firm at risk.

At DocuLex.ai, our founder Jason L. Melancon has spent 20+ years in civil litigation handling the same kinds of PHI this guide addresses. We built our platform with a Business Associate Agreement, zero medical data retention after analysis, and SSE-KMS encryption on AWS infrastructure because we understood these requirements from the practitioner side first. This guide covers what HIPAA actually requires when your firm introduces AI into workflows that touch medical records, what the ABA and state bars are saying about it, and how to implement compliant processes that hold up under scrutiny.

How HIPAA Applies to Law Firms Using AI

HIPAA does not automatically regulate every law firm that possesses medical records. The statute defines “covered entities” as health plans, health care clearinghouses, and certain health care providers. Most law firms are not covered entities. The more common regulatory hook is business associate status.

Under 45 C.F.R. § 160.103, a “business associate” includes any person or entity that provides legal services to or for a covered entity when those services involve access to PHI. HHS gives the example of an attorney whose legal services to a health plan involve access to protected health information. In practice, defense-side counsel representing a hospital, health insurer, or other health plan is the clearest HIPAA business associate scenario.

Where Plaintiff-Side PI Firms Fit

For plaintiff-side personal injury firms, the HIPAA picture is narrower than many articles suggest. When a PI firm obtains medical records through a client’s authorization or through litigation discovery, HIPAA governs the provider’s disclosure of those records more directly than the plaintiff firm’s downstream handling. The firm’s obligations typically run through Model Rule 1.6 confidentiality duties, court orders, contractual obligations, state privacy laws, and cybersecurity best practices.

That said, there are scenarios where plaintiff-side counsel does become a business associate, such as representing a covered entity (like a hospital or health plan) as a plaintiff. And even when HIPAA does not directly regulate a plaintiff firm’s handling of records, the security and vendor-diligence standards HIPAA requires are still the benchmark. Bar associations increasingly expect the same level of care regardless of whether HIPAA technically applies.
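The core question is whether the client is a covered entity and whether the legal work involves PHI access. The Python sketch below expresses that first-pass triage as a small decision function. The `Engagement` fields and the two return labels are hypothetical names chosen for illustration; the function encodes only the § 160.103 test described above and is no substitute for analyzing the actual engagement.

```python
from dataclasses import dataclass


@dataclass
class Engagement:
    """Hypothetical triage inputs; real classification needs counsel's review."""
    client_is_covered_entity: bool     # hospital, health plan, clearinghouse, covered provider
    services_involve_phi_access: bool  # will the firm see the client's PHI to do the work?


def classify_workflow(e: Engagement) -> str:
    """Rough triage mirroring the business associate definition in 45 C.F.R. § 160.103."""
    if e.client_is_covered_entity and e.services_involve_phi_access:
        # Legal services to or for a covered entity with PHI access.
        return "business_associate"
    # HIPAA likely does not reach the firm directly; Rule 1.6, court orders,
    # contracts, and state privacy law still apply.
    return "ethics_and_vendor_risk"


# Defense counsel for a hospital vs. plaintiff counsel for an injured individual.
print(classify_workflow(Engagement(True, True)))   # business_associate
print(classify_workflow(Engagement(False, True)))  # ethics_and_vendor_risk
```

Either label should lead to the same practical security standard; what changes is which legal framework the firm answers to.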

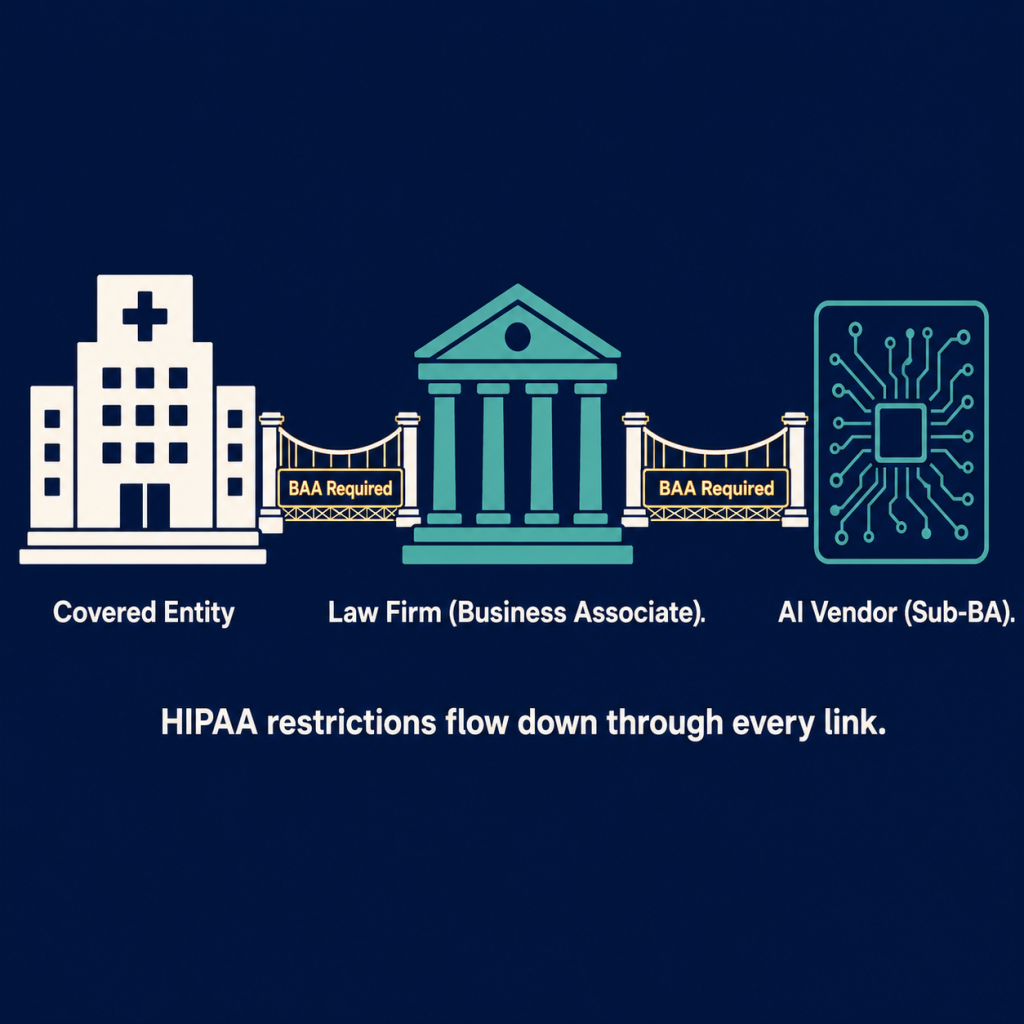

The AI Vendor as Downstream Business Associate

When a law firm acting as a business associate introduces AI into a HIPAA-regulated workflow, the AI vendor likely falls under the subcontractor or downstream business associate analysis. 45 C.F.R. § 160.103 expressly includes subcontractors that create, receive, maintain, or transmit PHI on behalf of a business associate. HHS FAQ 709 names litigation support personnel and file managers as downstream recipients who need the same restrictions as the primary business associate.

If your AI vendor receives or maintains your firm’s PHI to perform the workflow, the same logic applies: the vendor needs a BAA, and the restrictions need to flow down.
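Because the flow-down requirement applies at every level, it helps to check the whole chain rather than only your direct vendor. The Python sketch below models the chain as a small tree and lists every PHI-handling link that lacks a BAA with the party above it. The `Party` structure, the parties in the example, and their BAA status are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Party:
    """One link in a hypothetical PHI chain: firm, AI vendor, model provider, etc."""
    name: str
    handles_phi: bool          # creates, receives, maintains, or transmits PHI
    has_baa_with_parent: bool  # signed BAA with the party directly above it
    subcontractors: list[Party] = field(default_factory=list)


def missing_baas(party: Party, path: str = "") -> list[str]:
    """Return every downstream link that handles PHI without a BAA.

    The root party's own agreement (e.g. the firm's BAA with its covered-entity
    client) is not checked here; only the links below it are.
    """
    here = f"{path} -> {party.name}" if path else party.name
    gaps = []
    for sub in party.subcontractors:
        if sub.handles_phi and not sub.has_baa_with_parent:
            gaps.append(f"{here} -> {sub.name}")
        gaps.extend(missing_baas(sub, here))  # restrictions must flow all the way down
    return gaps


# Hypothetical chain: the AI vendor signed a BAA with the firm,
# but its model provider has none with the vendor.
firm = Party("Firm", True, True, [
    Party("AI vendor", True, True, [
        Party("Model provider", True, False),
        Party("Email service", False, False),
    ]),
])
print(missing_baas(firm))  # ['Firm -> AI vendor -> Model provider']
```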

What a Business Associate Agreement Actually Requires

A BAA is not a marketing badge. It is a detailed permission-and-obligation document with specific required provisions laid out in HHS sample BAA guidance.

HHS requires a compliant BAA to include at least ten core elements:

- Defined permitted and required uses and disclosures of PHI

- Prohibition on unauthorized further disclosure

- Requirement for appropriate safeguards

- Requirement for breach reporting

- Support for access, amendment, and accounting obligations

- Requirement for HHS access to records

- Return or destruction of PHI at termination (when feasible)

- Flow-down of all restrictions to subcontractors

- Permission for covered entity to terminate for material breach

- Specification of the BA’s direct obligations under HIPAA

That last point matters: a BAA does not eliminate the AI vendor’s own HIPAA liability. HHS states that business associates are directly liable for impermissible uses and disclosures, failure to comply with Security Rule safeguards for electronic PHI, and failure to meet breach notification obligations. OCR can enforce the underlying rules against the vendor directly, regardless of what the BAA says.
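When reviewing a vendor's BAA, a simple checklist keeps the ten elements above from being skipped. The Python sketch below records which elements a reviewer found and reports the rest. The short keys are our own shorthand, and whether a given clause actually satisfies an element remains a legal judgment the code does not make.

```python
# The ten elements listed above; the keys are hypothetical shorthand.
REQUIRED_BAA_ELEMENTS = {
    "permitted_uses": "Defined permitted and required uses and disclosures of PHI",
    "no_further_disclosure": "Prohibition on unauthorized further disclosure",
    "safeguards": "Requirement for appropriate safeguards",
    "breach_reporting": "Requirement for breach reporting",
    "individual_rights": "Support for access, amendment, and accounting obligations",
    "hhs_access": "Requirement for HHS access to records",
    "return_or_destroy": "Return or destruction of PHI at termination (when feasible)",
    "subcontractor_flow_down": "Flow-down of all restrictions to subcontractors",
    "termination_for_breach": "Permission for covered entity to terminate for material breach",
    "direct_obligations": "Specification of the BA's direct obligations under HIPAA",
}


def baa_gaps(found: set[str]) -> list[str]:
    """Return descriptions of required elements the reviewer did not find."""
    unknown = found - set(REQUIRED_BAA_ELEMENTS)
    if unknown:
        # Catch typos in reviewer notes rather than silently ignoring them.
        raise ValueError(f"Unrecognized element keys: {sorted(unknown)}")
    return [desc for key, desc in REQUIRED_BAA_ELEMENTS.items() if key not in found]


# A draft BAA that omits subcontractor flow-down language.
reviewed = set(REQUIRED_BAA_ELEMENTS) - {"subcontractor_flow_down"}
print(baa_gaps(reviewed))  # ['Flow-down of all restrictions to subcontractors']
```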

What This Means for Your Vendor Evaluation

When an AI vendor says they “support HIPAA compliance,” ask for the BAA itself. Review it against HHS’s sample provisions. Look specifically for subcontractor flow-down language (does the vendor use third-party model providers?), return/destroy provisions, breach notification timing, and whether the BAA actually covers the specific services you plan to use.

If a vendor will not sign a BAA, that is a stop sign. HHS requires covered entities to obtain written satisfactory assurances before engaging a business associate to handle PHI. Google’s own documentation states that customers without a signed BAA must not use PHI in Google Workspace or Cloud Identity services. And OCR has enforced this: North Memorial Health Care paid $1.55 million in a settlement that centered on the absence of a BAA with a major contractor.

Consumer AI vs. Enterprise AI: Where the Compliance Risk Lives

The distinction between consumer and enterprise AI is where most compliance failures happen. An attorney who opens a free ChatGPT session and pastes a client’s medical records into the prompt has made a fundamentally different decision than an attorney using an enterprise platform with a signed BAA and no-training commitments.

Here is how the differentiators break down across major providers:

| Feature | Consumer/Free AI | Enterprise/API AI |
|---|---|---|
| Business Associate Agreement | Not available | Available (must be signed separately) |
| Data used for model training | Typically yes, or opt-out required | Contractually excluded |
| Data retention | Varies; may retain even with history “off” | Controlled by BAA and retention policies |
| Encryption standards | Basic | Enterprise-grade (at rest and in transit) |
| Tenant isolation | None | Per-organization isolation |
| Audit logging | Limited or none | Full audit trails |

The gaps are more granular than most attorneys realize. Google’s consumer Gemini Apps documentation states that even when Gemini Apps Activity is turned off, conversations are still saved with the account for up to 72 hours. Microsoft’s enterprise Copilot documentation includes a notable caveat: HIPAA compliance does not apply to web-search queries because those queries fall outside the scope of the Data Processing Agreement and BAA.

These details matter because “we turned off history” or “it’s the enterprise version” can be incomplete answers if you have not traced the actual data path end to end.
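One way to trace the data path is to list every step PHI takes inside a product and note whether each step is covered by the signed agreement and how long data is retained there. The Python sketch below flags PHI-carrying steps that fall outside the BAA or whose retention you could not confirm. The `Hop` structure and the example configuration are hypothetical; the example merely mirrors the web-search caveat described above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Hop:
    """One step PHI may take inside a product (labels are hypothetical)."""
    step: str
    carries_phi: bool
    in_baa_scope: bool                # covered by the signed BAA/DPA?
    retention_hours: Optional[float]  # None means "we could not confirm"


def problem_hops(path: list[Hop]) -> list[str]:
    """Flag PHI-carrying hops outside the BAA or with unconfirmed retention."""
    issues = []
    for hop in path:
        if not hop.carries_phi:
            continue  # hops without PHI are outside this check
        if not hop.in_baa_scope:
            issues.append(f"{hop.step}: PHI leaves BAA scope")
        if hop.retention_hours is None:
            issues.append(f"{hop.step}: retention unconfirmed")
    return issues


# Hypothetical configuration: chat prompts are covered, but a web-search
# grounding step sits outside the BAA.
path = [
    Hop("chat prompt to model", True, True, 0),
    Hop("web-search grounding query", True, False, None),
]
for issue in problem_hops(path):
    print(issue)
```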

How Major Providers Handle PHI

OpenAI states that using its API platform with PHI requires a BAA to be in place first. Microsoft says its 365 Copilot and Copilot Chat operate under enterprise data protection terms where prompts, responses, and Microsoft Graph data are not used to train foundation models, and data is encrypted at rest and in transit. Google Workspace says customer data is not used to train underlying models outside Workspace without permission, and its Gemini Enterprise materials confirm that customer prompts, outputs, and training data are not used to train Google models or other customers’ models.

The consistent theme across all three: enterprise AI compliance is a shared-responsibility model. There is no HHS-recognized certification for “HIPAA compliant.” Google Cloud’s own HIPAA materials say this explicitly. The vendor can support compliance, but the firm’s own configuration, governance, and workflow design carry the rest of the obligation.

HIPAA’s Minimum Necessary Rule and AI Workflows

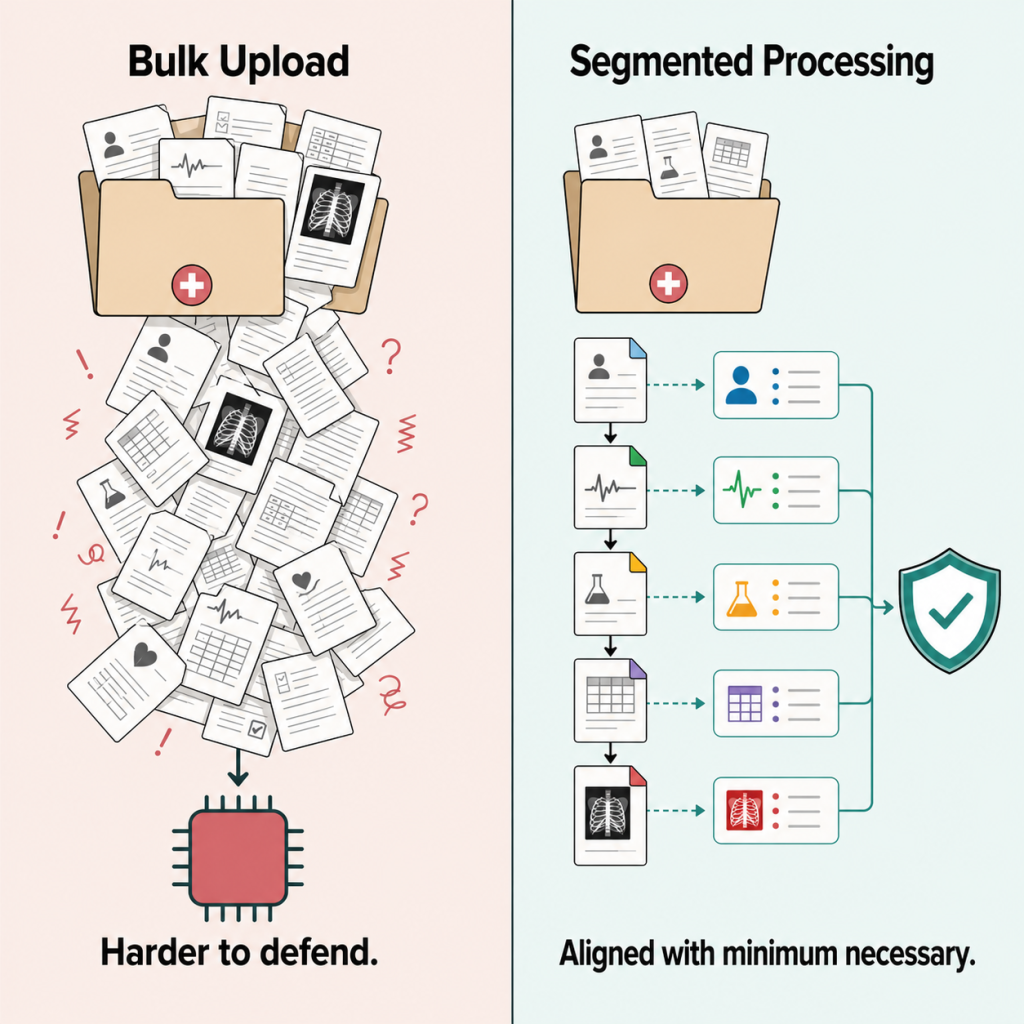

The minimum necessary standard is one of OCR’s most frequently enforced HIPAA provisions, and it creates a specific challenge for AI workflows that ingest entire case files.

Under 45 C.F.R. § 164.514(d), covered entities must identify who needs access to PHI, define the categories of PHI each class of person needs, and limit routine disclosures to what is reasonably necessary for the purpose. HHS’s litigation-specific FAQ (FAQ 705) applies the same principle to attorneys handling PHI: a lawyer-business associate must make reasonable efforts to limit PHI to the minimum necessary and may need to de-identify or remove direct identifiers depending on the circumstances.

OCR has not published AI-specific guidance on how minimum necessary applies to bulk case-file ingestion. But the existing framework creates a clear inference: “upload the full file and let the model figure it out” is harder to defend than workflows that segment data by purpose, restrict ingestion to the records actually needed for a specific task, and limit whole-file processing to tools that are contractually cleared for that use.

Practical Minimum Necessary Design for AI

If the task is generating a treatment summary paragraph for a demand letter, the most defensible approach is to segment the medical records to the relevant treatment dates, remove identifiers where feasible for the specific task, and use a platform that processes records in structured segments rather than feeding the entire file into a single prompt.
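A minimal Python sketch of that approach appears below: it keeps only visits inside the treatment window relevant to the task and masks a couple of direct identifiers before anything reaches an AI tool. The `RecordEntry` structure and both regular expressions are illustrative assumptions. HIPAA's Safe Harbor method in 45 C.F.R. § 164.514(b) lists 18 identifier types, so two patterns do not de-identify a record.

```python
import re
from dataclasses import dataclass
from datetime import date


@dataclass
class RecordEntry:
    visit_date: date
    provider: str
    text: str


# Illustrative patterns only; far from a complete identifier list.
IDENTIFIER_PATTERNS = [
    (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "[SSN]"),
    (re.compile(r"\bMRN[:#]?\s*\d+\b", re.IGNORECASE), "[MRN]"),
]


def redact(text: str) -> str:
    """Mask the direct identifiers matched by IDENTIFIER_PATTERNS."""
    for pattern, label in IDENTIFIER_PATTERNS:
        text = pattern.sub(label, text)
    return text


def minimum_necessary_slice(entries: list[RecordEntry], start: date, end: date) -> list[RecordEntry]:
    """Keep only visits in the task's treatment window, with identifiers masked."""
    return [
        RecordEntry(e.visit_date, e.provider, redact(e.text))
        for e in entries
        if start <= e.visit_date <= end
    ]


records = [
    RecordEntry(date(2024, 2, 3), "Urgent care", "MRN: 558812. Neck pain after collision."),
    RecordEntry(date(2019, 6, 1), "PCP", "Annual physical, unrelated."),
]
for e in minimum_necessary_slice(records, date(2024, 2, 1), date(2024, 8, 31)):
    print(e.visit_date, e.provider, e.text)
# 2024-02-03 Urgent care [MRN]. Neck pain after collision.
```

The 2019 visit never leaves the firm's system because it falls outside the window the demand-letter task needs.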

At DocuLex.ai, we designed our AI medical records processing to address this directly. Medical records are processed visit-by-visit in structured segments, with each piece of data tagged and embedded separately rather than ingested as a single bulk file. This approach aligns with the minimum necessary principle by limiting what the AI processes to what is actually needed for each specific output. The same segmented design applies to our legal AI chatbot, which retrieves case-specific answers from structured, indexed data rather than reprocessing entire files with each query.

What the ABA and State Bars Say About AI and Client Data

Even outside of HIPAA’s regulatory scope, attorney ethics rules create independent obligations around AI and confidential client data. Several opinions lay out the framework.

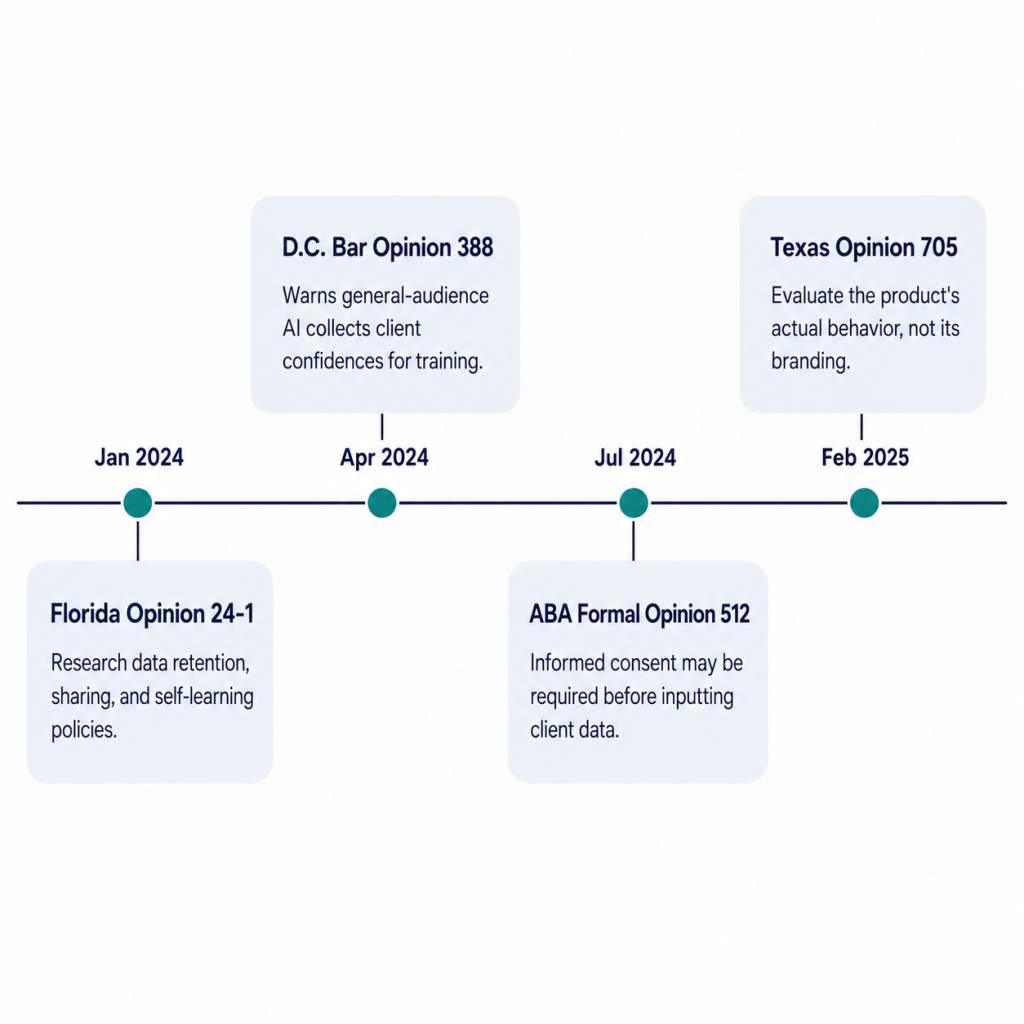

ABA Formal Opinion 512 (July 2024)

ABA Formal Opinion 512 is the foundational national ethics source for legal AI. The opinion addresses duties of competence, confidentiality, communication, supervision, candor to the tribunal, and reasonable fees as they apply to generative AI use.

The confidentiality guidance is the most directly relevant to HIPAA concerns: for many of today’s self-learning tools, if a lawyer proposes to input information relating to a representation, informed client consent may be required first. The opinion specifies that boilerplate engagement-letter language is not sufficient when informed consent is needed, and it directs lawyers to read the tool’s terms, privacy policy, and related vendor documents before use.

Florida Ethics Opinion 24-1 (January 2024)

Florida gives a practical checklist that maps onto medical record AI workflows. It tells lawyers to research the program’s policies on three specific topics before using it: data retention, data sharing, and self-learning. Those are the same three vectors that determine whether an AI tool is safe for PHI.

Texas Opinion 705 (February 2025)

Texas focuses on the actual behavior of the product, not its branding. The opinion warns that many AI tools invite conversational prompts that may expose privileged mental impressions or confidential facts, and that self-learning systems may store and later reveal inputs. If the lawyer is not reasonably satisfied the tool will protect the information, the lawyer should avoid inputting confidential information without client consultation and consent.

D.C. Bar Opinion 388 (April 2024)

D.C. draws the sharpest line between consumer and enterprise AI. It warns that many general-audience AI products are designed to collect and use user information, including client confidences, for training and transmission to future users. The opinion advises lawyers to either choose a product that can be trusted with confidential information, negotiate improved terms, or input only non-confidential data. It specifically identifies zero data retention as a meaningful product-level distinction.

Where HIPAA and Ethics Rules Meet

These opinions converge on the same framework HIPAA requires: know how your tool handles data, get it in writing, limit what you feed it, and supervise the output. For firms that handle PHI, HIPAA and ethics rules are complementary obligations. A firm that satisfies HIPAA’s vendor-management and technical-safeguard requirements is well-positioned on the ethics side too.

HIPAA Enforcement: Penalties and Recent Cases

HIPAA enforcement is not theoretical, and it is not limited to hospitals. OCR actively enforces against business associates, and the penalties are substantial enough to make vendor diligence a financial imperative.

Current Penalty Tiers (Updated January 2026)

The January 2026 inflation adjustment set the current HIPAA civil penalty tiers at:

| Tier | Minimum Per Violation | Maximum Per Violation | Annual Cap |
|---|---|---|---|
| Tier 1 (Did Not Know) | $145 | $73,011 | $2,190,294 |
| Tier 2 (Reasonable Cause) | $1,461 | $73,011 | $2,190,294 |
| Tier 3 (Willful Neglect, Corrected) | $14,602 | $73,011 | $2,190,294 |
| Tier 4 (Willful Neglect, Not Corrected) | $73,011 | $2,190,294 | $2,190,294 |
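The table combines per-violation amounts with an annual cap, and a quick calculation shows how the cap bounds exposure. The Python sketch below assumes violations of a single identical provision within one calendar year and uses the figures above as reported in this guide. `exposure_range` is a hypothetical helper; OCR sets actual penalties based on statutory factors, not this arithmetic. HHS also announced in 2019 that it would apply lower annual caps to Tiers 1 through 3 as a matter of enforcement discretion, so the uniform cap used here may overstate the ceiling for those tiers.

```python
# (minimum per violation, maximum per violation, annual cap), from the table above.
TIERS = {
    1: (145, 73_011, 2_190_294),
    2: (1_461, 73_011, 2_190_294),
    3: (14_602, 73_011, 2_190_294),
    4: (73_011, 2_190_294, 2_190_294),
}


def exposure_range(tier: int, violations: int) -> tuple[int, int]:
    """Min/max penalty for repeated violations of one provision in one calendar year."""
    low, high, cap = TIERS[tier]
    # The annual cap bounds the total regardless of how many violations accrue.
    return min(low * violations, cap), min(high * violations, cap)


print(exposure_range(2, 100))  # (146100, 2190294)
print(exposure_range(1, 10))   # (1450, 730110)
```

Even at the reasonable-cause tier, 100 violations reach the annual cap at the high end.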

Enforcement Volume

As of October 2024, OCR reported cumulative totals of 374,321 HIPAA complaints received, 1,193 compliance reviews initiated, and settlements or civil monetary penalties in 152 cases totaling $144,878,972. Minimum necessary violations are among OCR’s top five enforcement categories.

Business Associate Enforcement Cases

OCR has repeatedly enforced against business associates and their contractor relationships:

| Entity | Year | Amount | Issue |
|---|---|---|---|
| North Memorial Health Care | 2016 | $1,550,000 | Missing BAA with major contractor |
| Catholic Health Care Services (BA) | 2016 | $650,000 | No risk analysis, unencrypted device breach |
| MedEvolve (BA) | 2023 | $350,000 | Missing subcontractor BAA, unsecured server exposed 230,572 individuals |
| Doctors’ Management Services (BA) | 2023 | $100,000 | Ransomware, failed risk analysis, 206,695 affected |
| BST & Co. CPAs (BA) | 2025 | Settlement | Ransomware, risk analysis failures |
| MMG Fusion (BA) | 2026 | Settlement | Breach notification failures, 15 million individuals affected |

One caveat: OCR’s public enforcement record is dominated by healthcare providers and non-legal business associates. Settlements naming outside law firms as respondents are rare. But the enforcement pattern against software vendors, IT providers, and other service-industry BAs is directly relevant to how OCR would likely approach an AI vendor that mishandled PHI in a legal workflow.

The Proposed Security Rule Update and What It Means for AI

HHS issued the HIPAA Security Rule Notice of Proposed Rulemaking (NPRM) on December 27, 2024, with Federal Register publication on January 6, 2025. The current Security Rule remains in effect during the rulemaking process. As of the Spring 2025 Unified Agenda, HHS listed a target final action date of May 2026, though the rule had not yet been finalized as of the sources reviewed for this guide.

What the NPRM Would Change

The proposed changes would raise the documentation and control requirements for any organization handling electronic PHI, including AI tools:

- Remove the current “required vs. addressable” distinction for implementation specifications

- Require written documentation of all policies, procedures, plans, and analyses

- Require an annually updated technology asset inventory and network map

- Require greater specificity in risk analysis

- Require annual compliance audits

- Require encryption of ePHI at rest and in transit (with limited exceptions)

- Require multi-factor authentication (with limited exceptions)

- Require vulnerability scanning at least every six months and annual penetration testing

- Require business associates to verify annually to covered entities that required technical safeguards have been deployed, through written analysis and certification
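Several of these proposals are recurring deadlines, which makes them easy to track programmatically once you start documenting controls. The Python sketch below lists controls that have never been performed or whose interval has lapsed. The intervals reflect the NPRM as summarized above and are proposed, not final; "every six months" is approximated as 182 days, and the control names are hypothetical labels.

```python
from datetime import date, timedelta

# PROPOSED cadences from the NPRM summary above; subject to change in the final rule.
PROPOSED_CADENCES = {
    "vulnerability_scan": timedelta(days=182),  # approximates "at least every six months"
    "penetration_test": timedelta(days=365),
    "compliance_audit": timedelta(days=365),
    "asset_inventory_update": timedelta(days=365),
    "ba_safeguard_verification": timedelta(days=365),
}


def overdue(last_done: dict[str, date], today: date) -> list[str]:
    """List controls never performed or whose proposed interval has lapsed."""
    late = []
    for control, interval in PROPOSED_CADENCES.items():
        last = last_done.get(control)
        if last is None or today - last > interval:
            late.append(control)
    return late


# Everything last done on Jan 1: by Aug 1 only the six-month scan is late.
history = {name: date(2025, 1, 1) for name in PROPOSED_CADENCES}
print(overdue(history, date(2025, 8, 1)))  # ['vulnerability_scan']
```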

Why This Matters for AI Tools Now

Even though the NPRM is not yet finalized, the direction is clear: OCR is moving toward explicit inventory, mapping, and control-verification expectations that directly apply to AI vendor governance. Firms that start documenting their AI systems now, including what tools are used, by whom, for what matter types, with what data classes, with what retention settings, and under what contracts, will be well-positioned whether the final rule closely mirrors the NPRM or takes a modified form.
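The inventory fields listed in that paragraph map naturally onto a simple record type. The Python sketch below is one assumed shape for such an inventory, with a completeness check and a JSON export for the annual review file. The field names, tool names, and example values are hypothetical.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class AIToolRecord:
    tool: str
    users: list[str]          # roles or named users
    matter_types: list[str]
    data_classes: list[str]   # e.g. "PHI", "privileged", "public"
    retention_setting: str
    contracts: list[str]      # e.g. "BAA", "DPA", "enterprise terms"


def incomplete(inventory: list[AIToolRecord]) -> list[str]:
    """Return tools with any field left empty, i.e. not yet documented."""
    return [r.tool for r in inventory if not all(asdict(r).values())]


def to_json(inventory: list[AIToolRecord]) -> str:
    """Serialize the inventory for the annual review file."""
    return json.dumps([asdict(r) for r in inventory], indent=2)


inventory = [
    AIToolRecord("records-summarizer", ["paralegals", "associates"], ["personal injury"],
                 ["PHI"], "deleted after analysis", ["BAA"]),
    AIToolRecord("enterprise-chat", ["all staff"], [], ["confidential"], "30 days",
                 ["enterprise terms"]),
]
print(incomplete(inventory))  # ['enterprise-chat']
```

The second tool is flagged because nobody has recorded which matter types it is used for.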

How to Evaluate an AI Vendor for HIPAA Compliance

Based on the regulatory framework, bar guidance, and enforcement record outlined above, here are the specific questions to ask every AI vendor before using their tool with PHI:

Business Associate Agreement:

- Will you sign a BAA? (If no, stop here for PHI use)

- Does your BAA cover the specific services we plan to use?

- Does the BAA include subcontractor flow-down provisions?

Data handling:

- Do you use subcontractors that access PHI? If so, do they have BAAs?

- What are your default retention and deletion policies for prompts, outputs, and uploaded files?

- Are prompts, outputs, or retrieved files used for model training?

- What happens to data at contract termination?

Technical controls:

- What encryption standards do you use at rest and in transit?

- Is our firm’s data isolated from other tenants?

- What audit logging and access controls are in place?

- What are your incident notification timelines?

Verification:

- Can you provide written documentation of your security controls?

- Do you conduct regular penetration testing and vulnerability assessments?

- Will you provide annual compliance verification?
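Some of these questions have yes/no answers that can be screened mechanically, with the BAA question acting as a hard stop. The Python sketch below shows that screening under stated assumptions: `VendorAnswers` covers only a hypothetical subset of the questions, and free-text answers such as retention defaults, encryption standards, and incident timelines still need human review.

```python
from dataclasses import dataclass


@dataclass
class VendorAnswers:
    """Hypothetical yes/no subset of the questionnaire above."""
    signs_baa: bool
    baa_covers_planned_services: bool
    subcontractor_flow_down: bool
    trains_on_customer_data: bool
    written_security_docs: bool


def phi_readiness(a: VendorAnswers) -> tuple[bool, list[str]]:
    """Return (ready_for_phi, open_issues); a missing BAA ends the review."""
    if not a.signs_baa:
        return False, ["No BAA: stop here for PHI use"]
    issues = []
    if not a.baa_covers_planned_services:
        issues.append("BAA does not cover the services we plan to use")
    if not a.subcontractor_flow_down:
        issues.append("No subcontractor flow-down")
    if a.trains_on_customer_data:
        issues.append("Prompts, outputs, or files used for training")
    if not a.written_security_docs:
        issues.append("No written documentation of security controls")
    return not issues, issues


print(phi_readiness(VendorAnswers(True, True, False, True, True)))
```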

The answers to these questions determine whether a vendor supports HIPAA compliance or merely claims it. A platform that answers these concretely, the way DocuLex.ai does through our data security infrastructure, is substantively different from a vendor that redirects to a general privacy policy.

Implementation Checklist for HIPAA-Compliant AI Workflows

Putting this all together, here is a practical implementation framework drawn from HHS guidance, bar ethics opinions, and the proposed Security Rule:

1. Classify the workflow. Are you acting for a covered entity (making this a direct HIPAA BA/sub-BA problem), or are you handling PHI only as plaintiff-side counsel for an injured client (making this primarily an ethics, confidentiality, and vendor-risk problem)? The answer changes the regulatory analysis but should not change the practical security standard.

2. Vet every AI vendor in writing. Ask the questions listed above. Get written responses. Review the BAA against HHS’s sample provisions. Check subcontractor disclosures. Do not treat a vendor’s marketing page as a substitute for reading the actual terms of service and privacy policy.

3. Design prompts and ingestion around minimum necessary. If the task is a treatment summary for a demand, segment the file, remove identifiers where feasible, and restrict whole-file ingestion to tasks that genuinely require it and to tools contractually cleared for that use.

4. Implement written supervisory rules. Cover lawyers, paralegals, and intake or records staff. Include prohibited-use examples (no PHI in non-approved tools), output-review expectations, escalation paths for suspected inaccuracies, and client consent rules where self-learning products are still in use. ABA Opinion 512 and multiple state opinions make supervision and training explicit obligations.

5. Establish a firm-wide rule on unapproved tools. The biggest compliance risk is not the platform you chose. It is the paralegal who pastes records into a free AI tool because it was faster. Approved tools like a dedicated AI paralegal platform with proper BAAs should be the only option for PHI workflows. Written policy, training, and periodic reminders are the only reliable controls.

6. Keep an internal AI asset inventory. Document what tools are used, by whom, for what matter types, with what data classes, with what retention settings, and under what contracts. Whether or not the NPRM finalizes in its proposed form, this documentation is already defensible practice and will position your firm ahead of any final rule requirements.

7. Review and update annually. HIPAA compliance is not a one-time event. Vendor terms change. AI products add features. Staff workflows evolve. Annual review of your AI inventory, vendor agreements, and written policies keeps your compliance posture current.
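The approved-tools rule in item 5 is easier to follow when it is unambiguous about which tools may receive which kinds of data. One option, sketched below in Python, is to keep the rule as a lookup table that an intake form or internal portal could check. The tool IDs and data classes are hypothetical, and a check like this supplements, rather than replaces, the written policy and training the checklist describes.

```python
# Hypothetical approved tools and the data classes each is cleared for.
APPROVED_TOOLS = {
    "firm-ai-paralegal": {"PHI", "confidential", "public"},  # BAA in place
    "enterprise-chat": {"confidential", "public"},           # no BAA: never PHI
}


def check_use(tool_id: str, data_classes: set[str]) -> tuple[bool, str]:
    """Allow a use only if the tool is approved for every data class involved."""
    allowed = APPROVED_TOOLS.get(tool_id)
    if allowed is None:
        return False, f"{tool_id} is not an approved tool"
    blocked = data_classes - allowed
    if blocked:
        return False, f"{tool_id} is not approved for: {', '.join(sorted(blocked))}"
    return True, "ok"


print(check_use("free-chatbot", {"PHI"}))
print(check_use("enterprise-chat", {"PHI", "public"}))
print(check_use("firm-ai-paralegal", {"PHI"}))
```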

Frequently Asked Questions

Does Every Law Firm That Handles Medical Records Need To Comply With HIPAA?

Not necessarily. HIPAA’s direct regulatory reach extends to covered entities and their business associates. A law firm becomes a business associate when it provides legal services to or for a covered entity (like a hospital or health plan) and those services involve access to PHI. Plaintiff-side PI firms that receive records through client authorizations or discovery are typically governed more directly by ethics rules, court orders, and state privacy laws. That said, the security standards HIPAA requires are still the benchmark that bar associations reference when evaluating attorney competence in data protection.

What Happens If My Firm Uses a Consumer AI Tool With Client Medical Records?

You may be creating an unauthorized disclosure of PHI if your firm is a business associate, and regardless of HIPAA status you risk breaching your confidentiality obligations under Model Rule 1.6. Consumer AI tools typically lack BAAs, may use your inputs for model training, and may retain data even when history is turned off. ABA Formal Opinion 512 says informed client consent may be required before inputting client information into self-learning AI tools, and D.C. Bar Opinion 388 warns that general-audience AI products may collect client confidences for training and transmission to future users.

Is “HIPAA Compliant” a Certification I Can Look For?

No. There is no HHS-recognized certification for HIPAA compliance. When a vendor says “HIPAA compliant,” they are making a self-assessment claim. Google Cloud’s own HIPAA materials state this explicitly. What you can verify is whether the vendor will sign a BAA, what specific technical controls they implement, and whether their terms of service actually support compliant use of PHI.

What Should I Do If My Current AI Vendor Will Not Sign a BAA?

Stop using that vendor for any workflow involving PHI. HHS requires written satisfactory assurances before a business associate can share PHI with a downstream entity. No BAA means no authorized PHI use. This is the clearest bright line in the entire compliance framework.

How Does the Proposed Security Rule NPRM Affect My Firm’s AI Use?

The current Security Rule remains in effect while the NPRM is pending. But the NPRM signals where OCR is heading: toward mandatory technology asset inventories, annual compliance audits, encryption requirements, multi-factor authentication, and annual BA verification. Firms that start documenting their AI tools and security controls now will be ahead of whatever the final rule requires.

Can I Use AI To Process Medical Records If I Am Not a HIPAA Business Associate?

Yes, but your obligations do not disappear. Model Rule 1.6 requires competent safeguards for all confidential client information, and state bar opinions from Florida, Texas, and D.C. all require lawyers to understand how their AI tools handle data before using them with client information. The practical vendor-diligence steps are the same whether your firm’s obligations run through HIPAA, ethics rules, or both.

Take the Next Step

If your firm handles medical records in litigation and you are evaluating AI tools, the compliance framework matters as much as the feature set. At DocuLex.ai, we built our platform around these requirements because our founder practices in the same space you do. Our BAA with OpenAI, zero medical data retention policy, SSE-KMS encryption, and visit-by-visit medical records processing are the direct result of a practicing attorney designing a system he would trust with his own clients’ PHI. Schedule a free demo to see how compliant AI workflows work in practice.