Law firms that handle medical records (personal injury, medical malpractice, workers’ comp, mass tort) can qualify as Business Associates under HIPAA, which means the AI tools they use have to meet the same compliance bar as the tools a hospital uses. The best HIPAA-compliant AI tools for law firms in 2026 fall into three groups: DocuLex for litigation document automation and medical records processing; enterprise LLM platforms that will sign BAAs, such as ChatGPT Enterprise, Azure OpenAI, Google Vertex AI, Amazon Bedrock, and Anthropic Claude; and transcription services with HIPAA-specific plans, such as Sonix, Rev Enterprise, and Otter.ai Enterprise.

At DocuLex, we built our platform for civil litigation attorneys, with roughly 85% of our focus on personal injury firms that process medical records daily. Because PHI moves through our system constantly, HIPAA compliance shapes the underlying AWS architecture. Our experience working with PI firms shows that most get tripped up on the same compliance details: using consumer-grade AI without a BAA, overlooking subprocessor coverage, and assuming encryption alone satisfies the Security Rule.

Below, we break down what makes an AI tool HIPAA-compliant, the tools we see working for law firms in 2026, and how to evaluate a vendor before you let it anywhere near client PHI.

Why HIPAA Compliance Matters When Law Firms Use AI

When a personal injury firm orders medical records, reviews treatment histories, or drafts demand letters from clinical notes, it handles protected health information (PHI). When the firm receives those records while working on behalf of a covered entity, HIPAA treats it as a Business Associate of that entity.

That status carries real weight:

- The firm must sign BAAs with covered entities and with its own subprocessors, including AI vendors

- PHI has to stay encrypted in transit and at rest

- Access has to be limited by role, following the Minimum Necessary Standard

- Every interaction with PHI should be logged and auditable

Civil penalties escalate quickly for willful neglect, with per-violation fines that can reach six figures and annual caps that climb into the millions. The HHS Office for Civil Rights publishes the current penalty tiers and enforcement history.

The risk with AI is that a single prompt containing a client’s name, diagnosis, and treatment history pasted into a consumer chatbot can constitute an unauthorized disclosure. Non-compliant tools can trigger HHS fines, state bar complaints, malpractice exposure, and damaged relationships with referring attorneys. The underlying attorney-client privilege can also come into question.

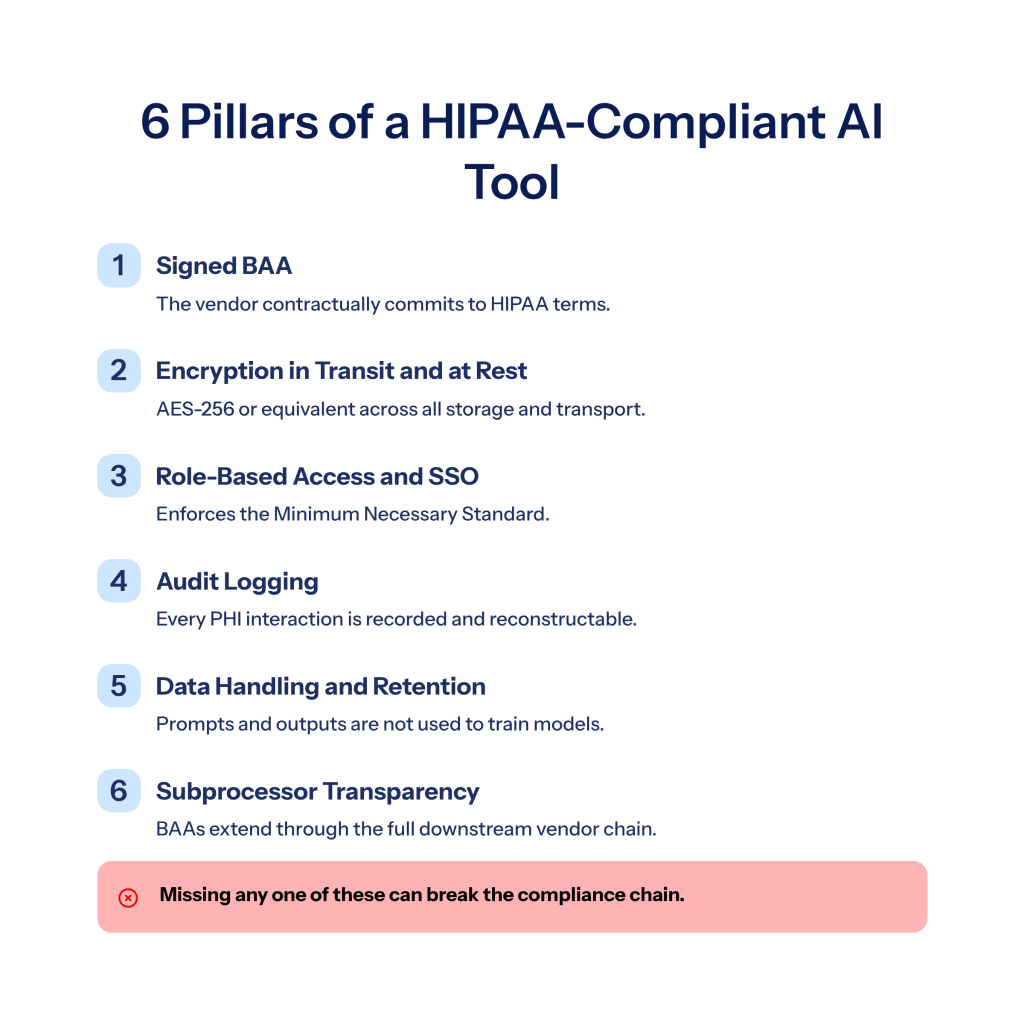

What Makes an AI Tool HIPAA-Compliant

No software is “HIPAA-certified,” because HHS does not run or recognize a certification program. A tool is HIPAA-compliant when the vendor will sign a BAA and when the platform has the technical and administrative controls the Security Rule requires.

Signed Business Associate Agreement (BAA)

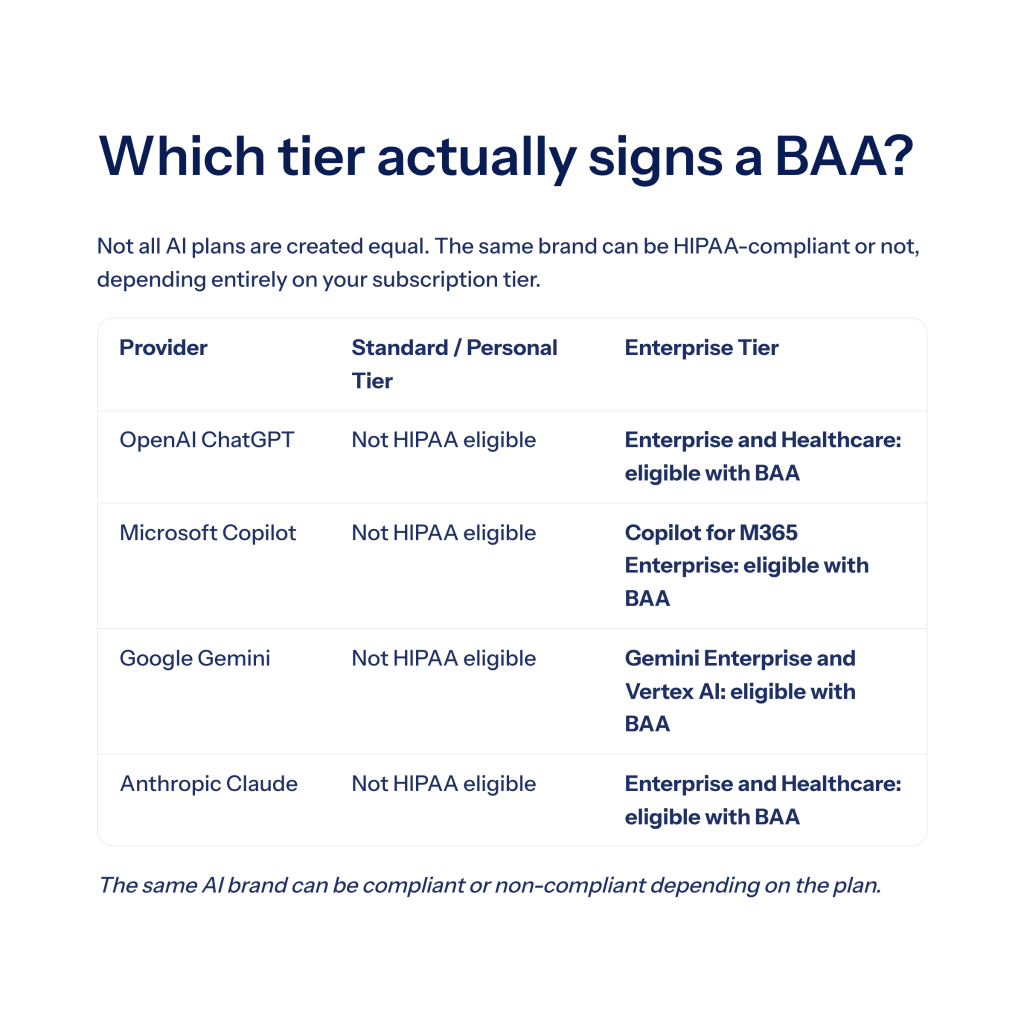

The BAA is non-negotiable. It defines how the vendor handles PHI, who its subprocessors are, breach notification timelines, and termination procedures. An AI vendor that will not sign a BAA should never receive PHI. This rules out most consumer-tier AI subscriptions, including standard ChatGPT, free Gemini, and personal Copilot.

Encryption in Transit and at Rest

AES-256 is the de facto industry standard for data at rest, with TLS protecting data in transit; the Security Rule itself does not name an algorithm. The AI platform should encrypt PHI when it enters the system, while it sits in storage, and whenever it moves between services. Server-side encryption with customer-managed keys (like SSE-KMS on AWS) gives firms stronger control than provider-managed keys.
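
To make the SSE-KMS option concrete, here is a minimal Python sketch of the request parameters an S3 upload would carry when it asks for encryption under a customer-managed key. The function name, bucket, object key, and key ARN are hypothetical placeholders; `ServerSideEncryption` and `SSEKMSKeyId` are the parameter names boto3's `put_object` accepts. This is an illustration under those assumptions, not a description of any particular vendor's setup.

```python
def build_encrypted_upload(bucket: str, key: str, body: bytes, kms_key_id: str) -> dict:
    """Return keyword arguments for an S3 put_object call that requests SSE-KMS.

    All argument values are supplied by the caller; nothing here talks to AWS.
    """
    if not kms_key_id:
        # Refuse to build a request that would fall back to provider-managed keys.
        raise ValueError("a customer-managed KMS key is required for PHI uploads")
    return {
        "Bucket": bucket,
        "Key": key,
        "Body": body,
        "ServerSideEncryption": "aws:kms",  # ask S3 to encrypt the object with KMS
        "SSEKMSKeyId": kms_key_id,          # the firm's own customer-managed key
    }


# Hypothetical values for illustration only.
params = build_encrypted_upload(
    bucket="example-firm-records",
    key="case-101/records.pdf",
    body=b"%PDF-...",
    kms_key_id="arn:aws:kms:us-east-1:111122223333:key/example-key-id",
)

# With boto3 installed and credentials configured, the upload itself would be:
#   import boto3
#   boto3.client("s3").put_object(**params)
```

Building the parameters separately from the upload call makes it easy to review, in one place, that no PHI upload can be sent without a customer-managed key.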

Role-Based Access Controls and SSO

Not every staff member needs access to every client’s records. HIPAA’s Minimum Necessary Standard expects firms to limit PHI access to people who need it for their job. Look for role-based permissions, SSO integration, and multi-factor authentication as table stakes.
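
A role-based check along these lines can be sketched in a few lines of Python. The roles, action names, and case IDs below are hypothetical; a real deployment would pull them from the firm's identity provider and case management system. The point is only that access requires both a permitted role and an assignment to the specific case.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class User:
    name: str
    role: str
    assigned_cases: frozenset = field(default_factory=frozenset)


# Hypothetical mapping of roles to the PHI actions each role may perform.
ROLE_ACTIONS = {
    "attorney": {"read_records", "draft_from_records"},
    "paralegal": {"read_records"},
    "intake": set(),  # intake staff never open medical records in this sketch
}


def can_access(user: User, case_id: str, action: str) -> bool:
    """Allow an action only if the role permits it AND the user is on the case."""
    allowed = ROLE_ACTIONS.get(user.role, set())  # unknown roles get nothing
    return action in allowed and case_id in user.assigned_cases


alice = User("alice", "attorney", frozenset({"case-101"}))
print(can_access(alice, "case-101", "draft_from_records"))  # True
print(can_access(alice, "case-202", "read_records"))        # False: not her case
```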

Audit Logging

The Security Rule requires the ability to reconstruct who did what with PHI. AI tools should log every prompt, model version, user, timestamp, and output so firms can respond to audits and investigate incidents.
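
As a rough illustration of what such a log entry could look like, the Python sketch below records the user, model version, and timestamp for each AI interaction, and chains entries together by hash so later tampering is detectable. The field names are hypothetical. One design assumption to flag: the entry stores SHA-256 digests of the prompt and output rather than the text itself, on the premise that the full text lives in encrypted storage under the firm's retention policy.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def audit_record(user, model_version, prompt, output, prev_hash=""):
    """Build one audit entry linked to the previous entry's hash."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "model_version": model_version,
        "prompt_sha256": _digest(prompt),   # digest only; full text stored elsewhere
        "output_sha256": _digest(output),
        "prev_hash": prev_hash,
    }
    # Hash the entry contents so any later edit changes the hash.
    entry["entry_hash"] = _digest(json.dumps(entry, sort_keys=True))
    return entry


def verify_chain(entries):
    """Return True if every entry is unmodified and linked to its predecessor."""
    prev = ""
    for e in entries:
        body = {k: v for k, v in e.items() if k != "entry_hash"}
        recomputed = _digest(json.dumps(body, sort_keys=True))
        if e["prev_hash"] != prev or recomputed != e["entry_hash"]:
            return False
        prev = e["entry_hash"]
    return True


first = audit_record("jdoe", "example-model-v1", "Summarize visit notes", "Summary text")
second = audit_record("asmith", "example-model-v1", "List providers", "Provider list",
                      prev_hash=first["entry_hash"])
print(verify_chain([first, second]))  # True
```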

Data Handling and Retention

This is the biggest gap in consumer AI products. Many free and prosumer tools use inputs to train their models, which means PHI submitted in a prompt can end up in a training dataset. Enterprise HIPAA-eligible tools should guarantee that prompts and outputs are not used for training, that data is stored in specific geographic regions, and that retention periods are configurable.
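
A configurable retention period comes down to a cutoff date and a purge job. The Python sketch below shows the selection step only, under the assumption that each stored item carries a timezone-aware `created_at` timestamp; the item shape and function name are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def expired_items(items, retention_days, now=None):
    """Return the stored items that are older than the retention window.

    retention_days=0 models a zero-retention setting: anything created
    before `now` is due for deletion.
    """
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=retention_days)
    return [item for item in items if item["created_at"] < cutoff]


# Hypothetical stored prompts with fixed timestamps for illustration.
stored = [
    {"id": "a", "created_at": datetime(2026, 1, 1, tzinfo=timezone.utc)},
    {"id": "b", "created_at": datetime(2026, 1, 30, tzinfo=timezone.utc)},
]
as_of = datetime(2026, 1, 31, tzinfo=timezone.utc)

print([i["id"] for i in expired_items(stored, 7, now=as_of)])  # ['a']
```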

Subprocessor Transparency

Most AI platforms rely on third parties (cloud hosts, model providers, vector databases). The BAA should cover those subprocessors, or they should have their own BAAs in the chain. Ask for the list in writing.
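
Once the vendor supplies that list, checking the chain is a simple tree walk. The Python sketch below assumes each party is described by a dictionary with hypothetical keys (`name`, `handles_phi`, `baa_signed`, `subprocessors`) and returns every downstream party that touches PHI without a BAA. The vendor names are invented.

```python
def uncovered_parties(party: dict) -> list:
    """Walk the subprocessor tree and list PHI handlers that lack a BAA."""
    missing = []
    for sub in party.get("subprocessors", []):
        if sub.get("handles_phi") and not sub.get("baa_signed"):
            missing.append(sub["name"])
        # Keep walking: a subprocessor may have subprocessors of its own.
        missing.extend(uncovered_parties(sub))
    return missing


# Hypothetical vendor disclosure for illustration only.
vendor = {
    "name": "ExampleLegalAI",
    "subprocessors": [
        {"name": "ExampleCloud", "handles_phi": True, "baa_signed": True},
        {
            "name": "ExampleModelCo",
            "handles_phi": True,
            "baa_signed": False,
            "subprocessors": [
                {"name": "ExampleAnalytics", "handles_phi": False, "baa_signed": False},
            ],
        },
    ],
}

print(uncovered_parties(vendor))  # ['ExampleModelCo']
```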

Top HIPAA-Compliant AI Tools by Category

We’ve grouped these by what they actually do, since “AI tool” covers everything from a full case file platform to a single-purpose transcription service.

1. DocuLex (Litigation Document Automation and Medical Records)

DocuLex is the platform we built for civil litigation, with a heavy focus on personal injury. It sits in the evidence management and document automation category and drafts active work product from case data, including pleadings, demand letters, medical chronologies, and discovery responses. Our legal document automation software handles case files, pleadings, discovery, and medical records in a single system.

What it does:

- Processes medical records visit by visit and generates chronologies, patient visit summaries, and billing summaries

- Drafts pleadings, correspondence, discovery responses, and demand letters from case data

- Provides a legal AI chatbot that answers case-specific questions using the firm’s own case file

- Maintains a centralized, searchable case database with vector embeddings for fast retrieval

HIPAA posture:

- Built on AWS with SSE-KMS encryption

- Business Associate Agreements in place with model providers

- Designed so medical data is not retained by AI model providers after analysis

- Audit logging on every AI interaction

Best for: Personal injury firms handling medical records at scale, civil litigators who want a single platform for case files and drafting, and solo practitioners who need associate-attorney-level output. See how we handle AI medical records processing and our broader data security framework for the details.

2. ChatGPT Enterprise and ChatGPT for Healthcare (OpenAI)

OpenAI’s enterprise tiers are HIPAA-eligible for customers who sign a BAA. Standard ChatGPT (Free, Plus, Team) is not covered and should not receive PHI under any circumstances.

HIPAA posture:

- BAA available for Enterprise and healthcare-tier customers

- Prompts and outputs not used for training on enterprise tiers

- SSO, SCIM, and admin controls for firm-wide management

Best for: General drafting, research, and summarization where the firm already has OpenAI infrastructure. OpenAI publishes its enterprise data handling terms on its enterprise privacy page.

3. Microsoft Azure OpenAI and Copilot

Azure OpenAI Service is HIPAA-eligible under Microsoft’s umbrella BAA. Customer prompts and data are not used to train OpenAI models when run through Azure.

HIPAA posture:

- Covered under Microsoft’s standard BAA

- Customer data not used for model training

- Customer-managed keys and region pinning available

- Copilot for Microsoft 365 HIPAA-eligible when configured through an enterprise agreement

Best for: Firms already running on Microsoft 365 that want AI embedded in Word, Outlook, and Teams. Microsoft maintains coverage scope on its HIPAA compliance page.

4. Google Vertex AI and Gemini Enterprise

Google Cloud covers Vertex AI and Gemini Enterprise under its HIPAA BAA. Law firms using Google Workspace for Enterprise can extend that coverage into AI workflows.

HIPAA posture:

- HIPAA BAA covers Vertex AI and Gemini Enterprise

- Encryption at rest and in transit by default

- Region pinning and customer-managed encryption keys available

Best for: Google Workspace firms and technical teams building custom AI pipelines. Google publishes its covered services on the Google Cloud HIPAA page.

5. Amazon Bedrock

Bedrock gives firms access to foundation models (Claude, Llama, Titan, Mistral) through AWS, which means it can inherit AWS’s HIPAA eligibility once a BAA is in place.

HIPAA posture:

- HIPAA-eligible service

- BAA available through AWS Compliance

- AWS KMS encryption and VPC isolation

- Prompts and responses not used to train models

Best for: Firms with technical resources that want to build custom AI workflows on AWS. The AWS Bedrock security page covers compliance scope in detail.

6. Anthropic Claude for Enterprise and Healthcare

Anthropic has expanded Claude’s availability to regulated industries, including a Claude for Healthcare program with HIPAA-ready features and BAAs for qualifying customers.

HIPAA posture:

- BAAs available for qualifying enterprise and healthcare customers

- Customer data not used for training on enterprise tiers

- Strong performance on long-context legal and medical documents

Best for: Firms that want long-context capabilities for deposition transcripts and voluminous medical record sets. Anthropic details the program in its healthcare and life sciences announcement.

7. Sonix (Transcription)

Sonix offers AI transcription with healthcare and legal vocabulary support, and it markets HIPAA-compliant processing on qualifying plans.

HIPAA posture:

- BAA available on higher-tier plans

- Encryption in transit and at rest

- Medical and legal terminology models

Best for: Deposition transcripts, medical dictations, recorded statements, and IME recordings.

8. Rev Enterprise (Transcription)

Rev offers a HIPAA-specific enterprise plan that includes a BAA. The plan restricts data handling and supports AI-only transcription (no human reviewer access to PHI) for Privacy Rule compliance.

HIPAA posture:

- HIPAA Enterprise plan with BAA

- AI-only processing option available

- Encryption and limited retention

Best for: Firms that already use Rev and need to upgrade for PHI-containing audio.

9. Otter.ai Enterprise (Transcription)

Otter.ai added HIPAA support to its Enterprise plan, which allows meeting and client-call transcription for firms handling PHI.

HIPAA posture:

- HIPAA-compliant Enterprise plan with BAA

- Admin controls and SSO

- Encryption and retention controls

Best for: Client intake calls, internal case strategy meetings, and recorded statements where an always-on transcription tool is useful.

HIPAA-Compliant AI Tools Comparison

| Tool | Category | BAA Available | Best Use Case |
| --- | --- | --- | --- |
| DocuLex | Litigation document automation and medical records | Yes | PI firms drafting pleadings, demands, and medical chronologies |
| ChatGPT Enterprise | General-purpose LLM | Yes (Enterprise tier) | General research and drafting |
| Azure OpenAI | Cloud AI platform | Yes | Microsoft 365 firms integrating AI into docs |
| Google Vertex AI | Cloud AI platform | Yes | Google Workspace firms and custom AI builds |
| Amazon Bedrock | Cloud AI platform | Yes | Custom AI workflows on AWS |
| Anthropic Claude | General-purpose LLM | Yes (Enterprise) | Long-context document and record analysis |
| Sonix | Transcription | Yes | Deposition and medical dictation |
| Rev Enterprise | Transcription | Yes | High-volume audio transcription |
| Otter.ai Enterprise | Meeting transcription | Yes | Client calls and team meetings |

How to Evaluate a HIPAA-Compliant AI Tool Before You Sign

Before a contract goes out, work through this checklist with the vendor:

- Will they sign a BAA in writing? If the BAA requires an enterprise contract minimum your firm cannot hit, the tool is not realistic.

- Does the BAA cover all subprocessors? Ask for the list. Cloud hosts, model providers, vector databases, and embedding services should all be covered.

- Is PHI used for training? The answer should be a clear no, in writing.

- Where is data stored? U.S.-hosted is standard. Confirm region options if your state has residency requirements.

- Can you control retention? Look for zero-retention options, configurable retention windows, and on-demand deletion.

- What logging is available? You should be able to reconstruct who accessed what PHI and when.

- Is encryption customer-managed? Customer-managed keys give firms stronger control than provider-managed keys.

- What’s the breach response timeline? Breach notification windows should be specified in the BAA.
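
Firms comparing several vendors may find it useful to record the answers in a structured form. The Python sketch below is one way to do that. The field names mirror the checklist questions above but are our own labels, and the split between hard blockers and softer preferences is an assumption for illustration; each firm should set that line with its own counsel.

```python
from dataclasses import dataclass, fields


@dataclass
class VendorAnswers:
    signs_baa: bool
    baa_covers_subprocessors: bool
    no_training_on_phi: bool
    acceptable_data_region: bool
    retention_controls: bool
    audit_logging: bool
    customer_managed_keys: bool
    breach_timeline_in_baa: bool


# Assumption: these items stop a deal outright; the rest are negotiable gaps.
BLOCKERS = {"signs_baa", "baa_covers_subprocessors", "no_training_on_phi"}


def open_items(answers: VendorAnswers) -> list:
    """List every checklist item the vendor answered 'no' to, in checklist order."""
    return [f.name for f in fields(answers) if not getattr(answers, f.name)]


def is_blocked(answers: VendorAnswers) -> bool:
    """True if any unanswered item is one of the hard blockers."""
    return any(name in BLOCKERS for name in open_items(answers))


# Hypothetical vendor: solid BAA, but no customer-managed keys.
candidate = VendorAnswers(True, True, True, True, True, True, False, True)
print(open_items(candidate))  # ['customer_managed_keys']
print(is_blocked(candidate))  # False
```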

Common HIPAA Mistakes Law Firms Make With AI

We see a handful of avoidable errors repeatedly.

Using consumer-tier AI with PHI. Free ChatGPT, free Gemini, and personal Copilot are not HIPAA-compliant. Pasting a client’s medical summary into them counts as an unauthorized disclosure, even if the firm pays for a different enterprise seat on an unrelated tool.

Signing the BAA and stopping there. Signing a BAA is the entry requirement. The firm still has to configure access controls, enable audit logging, restrict PHI to authorized users, and train staff on what not to input.

Assuming the cloud provider’s BAA covers every AI feature. Not every service under Microsoft, Google, or AWS is HIPAA-eligible. Check the covered services list before enabling a new AI feature on an existing contract.

Overlooking subprocessors. A vendor may have a solid BAA, but if it passes PHI to a model provider that hasn’t signed one, the chain breaks and the firm is exposed.

Retaining PHI in prompt logs. Prompt history can become a liability if it sits in debug storage. Work with vendors that minimize prompt retention and give you deletion controls.

Frequently Asked Questions

Is ChatGPT HIPAA-compliant for law firms?

Standard ChatGPT (Free, Plus, Team) is not HIPAA-compliant. ChatGPT Enterprise and ChatGPT for Healthcare are HIPAA-eligible once a BAA is signed with OpenAI.

Do law firms need a BAA with an AI vendor?

Yes. If an AI tool will process any protected health information, HIPAA requires a signed Business Associate Agreement with the vendor.

What happens if a law firm uses a non-compliant AI tool with PHI?

It counts as an unauthorized disclosure under HIPAA. Consequences can include HHS civil penalties, state bar complaints, malpractice exposure, and loss of client and referral trust.

Is HIPAA compliance enough for legal AI tools?

HIPAA covers medical data handling. For legal work, firms should also look for SOC 2 Type II attestation, subprocessor transparency, and evidence that the tool is designed for attorney-client privilege and legal workflows specifically.

Can AI transcription services be used for depositions involving medical records?

Yes, if the service has a HIPAA-compliant enterprise plan with a signed BAA. Sonix, Rev Enterprise, and Otter.ai Enterprise each offer HIPAA tiers for this use case.

Choosing the Right HIPAA-Compliant AI Stack for Your Firm

The right stack depends on practice area. A PI firm handling medical record chronologies, demand letters, and pleadings needs different tools than a defense firm running commercial litigation. In either case, look for purpose-built tools over general-purpose chatbots for the work that touches PHI most often. The general-purpose LLMs are useful for research and drafting, but the heavy lifting on medical records and litigation documents is usually better handled by a platform designed for it.

If your firm is evaluating AI for medical records processing, demand letter drafting, or litigation document automation, we built DocuLex for exactly this work. Join the DocuLex waitlist to see how the platform fits your practice.